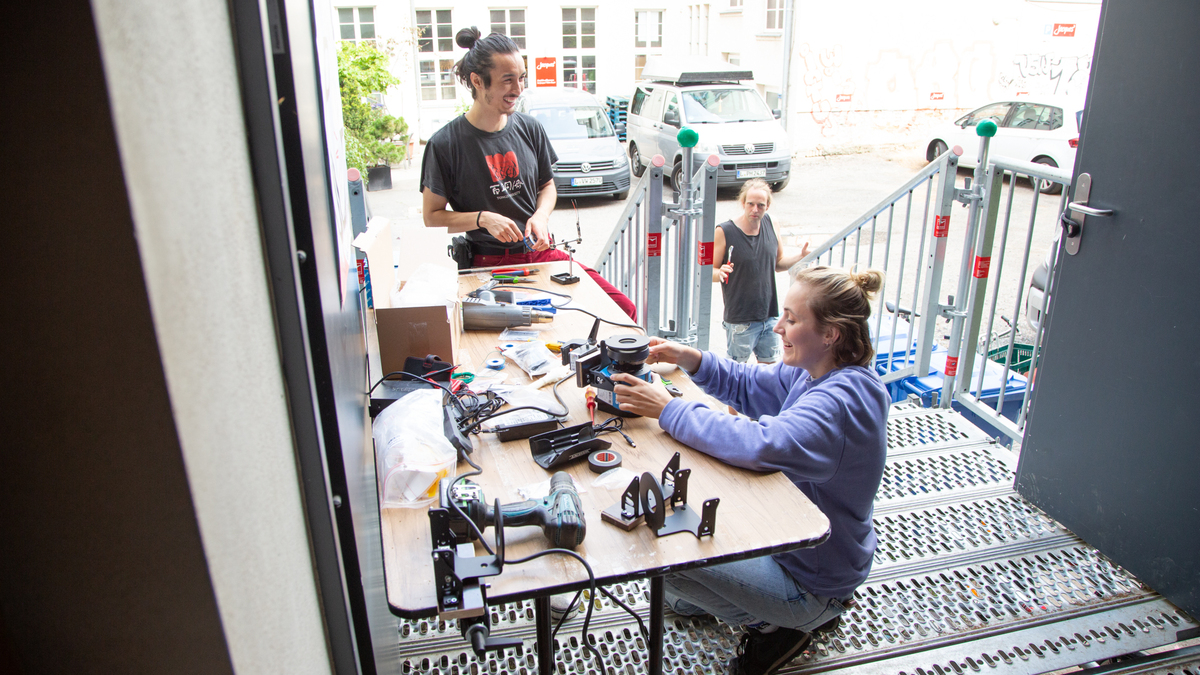

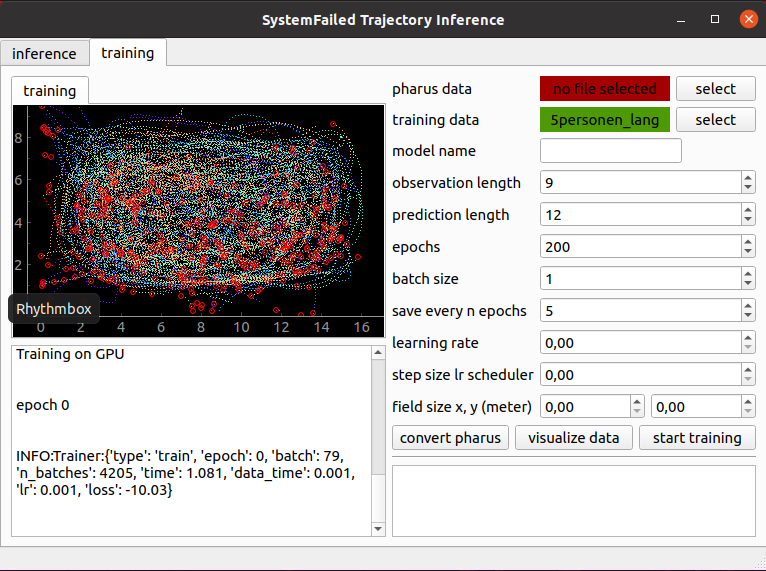

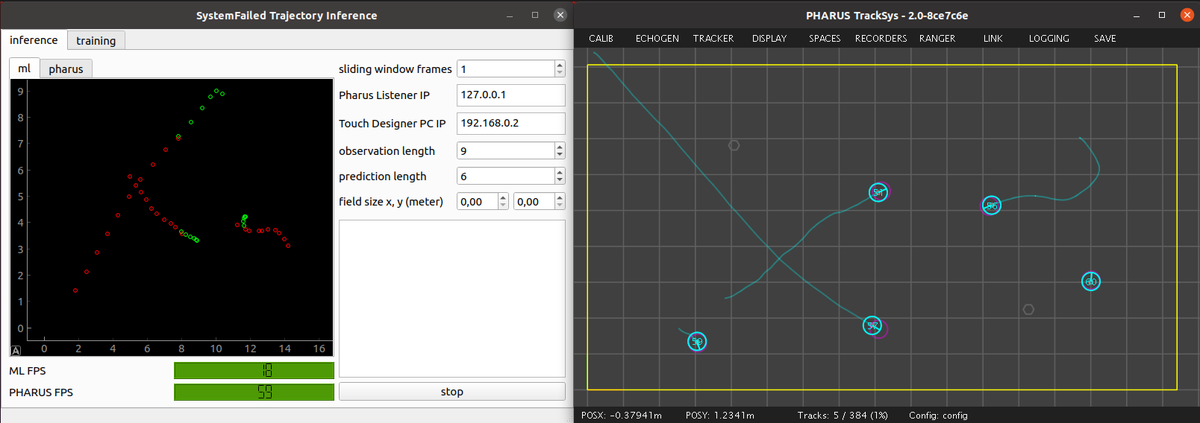

CODING - The nerds develop the machine learning system, the control software for lighting and projections, and the geometric calculations used to evaluate movements. The machine learning is based on the trajectory forecasting framework TrajNet++. The algorithm attempts to predict how people move in groups. The software development can be followed on GitHub.
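
To give a rough idea of what trajectory forecasting means, here is a minimal sketch of a constant-velocity baseline: it extends a person's track by repeating the last observed step, and scores the guess by its average distance from the true path. This is not the project's code and not the TrajNet++ API. The function names `constant_velocity_forecast` and `average_displacement_error` are hypothetical, and a learned model would additionally take the surrounding people into account.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position of one person at one time step


def constant_velocity_forecast(observed: List[Point], n_steps: int) -> List[Point]:
    """Extrapolate a track by repeating its last observed displacement.

    Hypothetical helper for illustration only; it ignores other people.
    """
    if len(observed) < 2:
        raise ValueError("need at least two observed positions")
    (x0, y0), (x1, y1) = observed[-2], observed[-1]
    vx, vy = x1 - x0, y1 - y0  # displacement per time step
    return [(x1 + vx * k, y1 + vy * k) for k in range(1, n_steps + 1)]


def average_displacement_error(predicted: List[Point], actual: List[Point]) -> float:
    """Mean Euclidean distance between predicted and actual positions."""
    if not predicted or len(predicted) != len(actual):
        raise ValueError("tracks must be non-empty and of equal length")
    distances = [math.hypot(px - ax, py - ay)
                 for (px, py), (ax, ay) in zip(predicted, actual)]
    return sum(distances) / len(distances)


if __name__ == "__main__":
    track = [(0.0, 0.0), (1.0, 0.5)]  # made-up observations
    print(constant_velocity_forecast(track, 3))
```

A baseline like this is useful mainly as a yardstick: a model that accounts for group behaviour should achieve a lower displacement error than simply assuming everyone keeps walking straight.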